
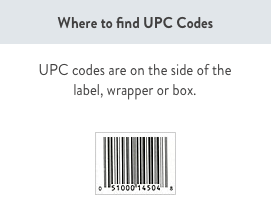

Berkeley UPC code

4/27/2023

When the scanner at the checkout line scans a product, the cash register sends the UPC number to the store's central POS (point of sale) computer to look up the UPC number. As you can see, there is no price information encoded in a bar code.
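The checkout lookup described above can be sketched in a few lines of C. This is only an illustration, not how any particular POS system works: the table contents, the prices, and the names `pos_entry`, `pos_table`, and `pos_lookup_price` are all made up for the example. The point it shows is that the price comes from the store's table, keyed by the UPC number, and not from the bar code itself.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical POS price table. The bar code carries only the UPC number,
   so the price has to live in the store's database, keyed by that number. */
struct pos_entry {
    const char *upc;   /* 12-digit UPC-A number, stored as text */
    int price_cents;   /* made-up price, for illustration only */
};

static const struct pos_entry pos_table[] = {
    { "036000291452", 399 },
    { "012345678905", 1250 },
};

/* Returns the price in cents for a scanned UPC number,
   or -1 if the number is not in the table. */
int pos_lookup_price(const char *upc)
{
    assert(upc != NULL);
    size_t n = sizeof pos_table / sizeof pos_table[0];
    for (size_t i = 0; i < n; i++) {
        if (strcmp(pos_table[i].upc, upc) == 0)
            return pos_table[i].price_cents;
    }
    return -1;
}
```

A register would call something like `pos_lookup_price("036000291452")` after each scan. Changing a price then means editing the table, while the printed bar code stays the same.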

Unified Parallel C (UPC), a parallel extension to ANSI C, is designed for high performance computing on large-scale parallel machines. With general-purpose graphics processing units (GPUs) becoming an increasingly important high performance computing platform, we propose new language extensions to UPC to take advantage of GPU clusters. We extend UPC with hierarchical data distribution, revise the UPC execution model to mix SPMD with fork-join execution, and modify the semantics of upc_forall to reflect the data-thread affinity on a thread hierarchy. We implement the compiling system, including affinity-aware loop tiling, GPU code generation, and several memory optimizations targeting NVIDIA CUDA. We also put forward unified data management for each UPC thread to optimize data transfer and memory layout for the separate memory modules of CPUs and GPUs. The experimental results show that the UPC extension has better programmability than the mixed MPI/CUDA approach. We also demonstrate that the integrated compile-time and runtime optimization is effective in achieving good performance on GPU clusters.
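To make the idea of data-thread affinity in upc_forall more concrete, here is a rough plain-C emulation of the standard (flat) UPC rule. It is not the hierarchical extension described above, whose syntax is not given here. The helper names `upc_owner` and `scale_owned` are hypothetical, and an ordinary local array stands in for a UPC `shared [block]` array.

```c
#include <assert.h>
#include <stddef.h>

/* Owner of element i for a UPC array declared `shared [block] T a[n]`:
   blocks of `block` consecutive elements are dealt round-robin
   to the threads. */
size_t upc_owner(size_t i, size_t block, size_t threads)
{
    assert(block > 0 && threads > 0);
    return (i / block) % threads;
}

/* Plain-C emulation of
       upc_forall (i = 0; i < n; i++; &a[i]) a[i] *= 2;
   as seen by one thread: each thread walks the whole index space but
   executes only the iterations whose element it owns.
   Returns how many iterations this thread executed. */
size_t scale_owned(int *a, size_t n, size_t block,
                   size_t threads, size_t mythread)
{
    size_t done = 0;
    for (size_t i = 0; i < n; i++) {
        if (upc_owner(i, block, threads) == mythread) {
            a[i] *= 2;
            done++;
        }
    }
    return done;
}
```

Calling `scale_owned` once per thread id covers every element exactly once. In real UPC the threads would run concurrently, and each would touch only the part of the shared array that is local to it.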
